Tokenmaxxing: The struggle is real

Thursday rolls around…

… and I am now rationing my token spend like it’s the last granola bar on a backpacking trip.

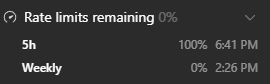

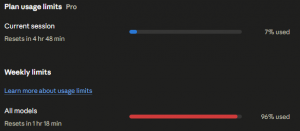

ChatGPT Codex ran out of tokens over 24 hours ago.

Claude is down to its last 3%.

One hour and 13 minutes can’t get here fast enough.

This is not coding anymore.

This is survival.

I am officially tokenmaxxing (at a personal level).

I REALLY need to figure out a better way to optimize my use of models, such as running local models to get more usage out of my frontier models for application development. I’m on sprint 5 of 8 on this application, and I’d really like to have it finished by the end of the weekend.

What Is Tokenmaxxing? Corporate vs Personal

Corporate tokenmaxxing: When organizations encourage, track, or celebrate heavy AI token usage as a signal of productivity or AI adoption.

At first, it sounds reasonable: use the tools, experiment aggressively, make AI part of the workflow.

Then someone creates a dashboard. Then a leaderboard. The goal stops being better work and becomes higher token burn.

Because sometimes quantity has a quality all its own — especially when it fits nicely on a dashboard.

Personal Tokenmaxxing

Personal tokenmaxxing: When you are deep into AI-assisted development and suddenly realize your best coding partners are running out of tokens before your project is finished.

It starts as a joke.

Then it becomes a workflow problem.